Behavioral Evidence > Self-Reported Evidence

What People Say and What People Do Are Different

Summary: Using a real usability test example, this week’s article shows how teams often give too much weight to what users say after the fact and not enough weight to what actually happened during real-world tasks.

Years ago, I ran a moderated usability test on a complex workflow redesign. The test consisted of a handful of top tasks. One of those tasks could have been described as, “This is the core value of the product.” For this test, the participant was a perfect match for the user segment we wanted to learn more about. They understood the domain, and they used the tool we were testing in their day-to-day work. In other words, everything was on the up-and-up.

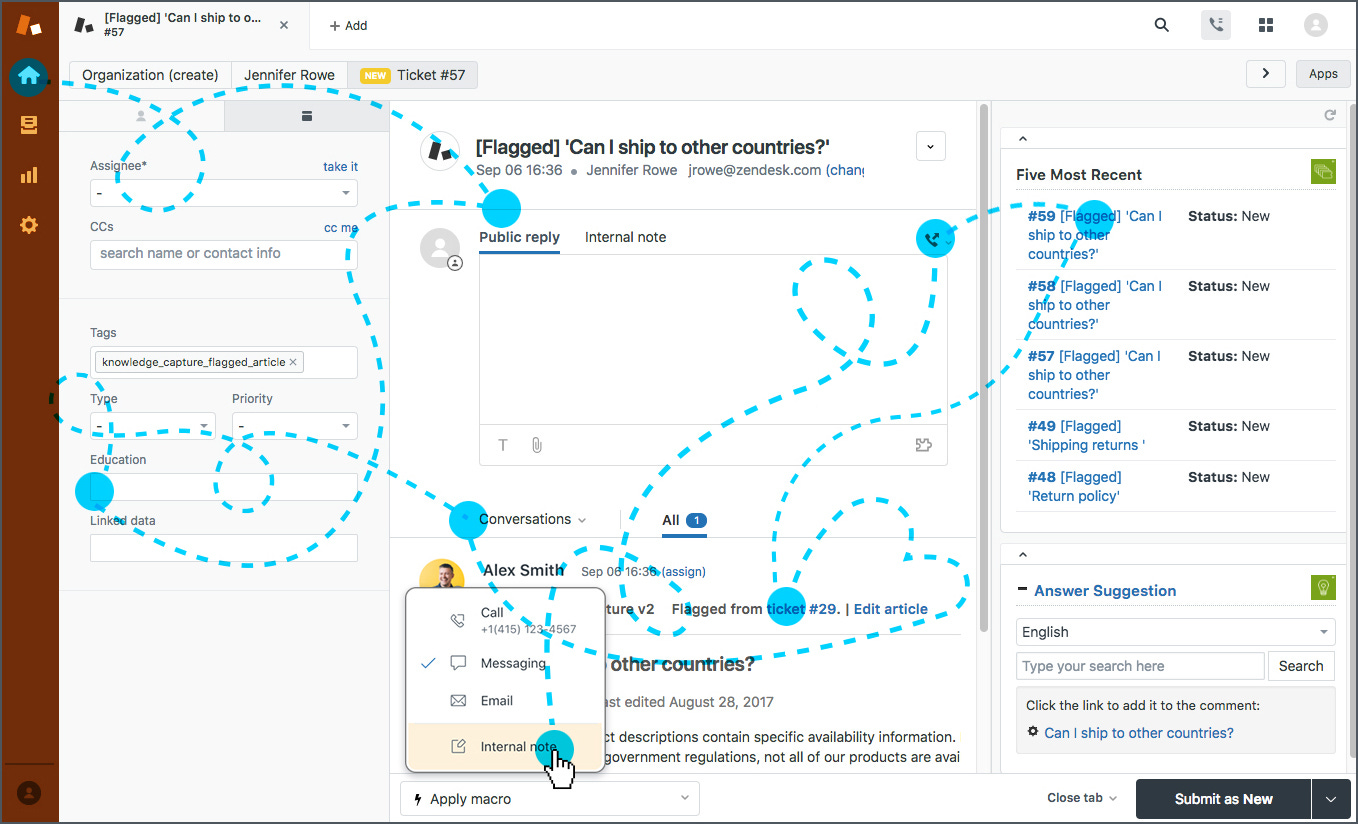

When we reached that one critical task, everything started normally. Then the participant hit the first decision point and hesitated. They clicked into the wrong area, backed out, tried a different path, and started narrating little “wait, what” comments that you only hear when someone is quietly losing confidence. A few minutes later, they were deep into a workaround that looked nothing like the intended path. After some more struggling, the participant asked me, half-jokingly, if the system was supposed to do something that it was not doing. The flow never fully recovered, and they ultimately did not successfully complete the task.

Once we had gone through all of the remaining tasks, I moved into the interview-style wrap-up questions. I started that wrap-up section with a pretty simple prompt: “Talk me through how task X went for you.” The participant shrugged, paused as they thought back for a second, then said something like, “Honestly, it was real easy. Pretty intuitive.”

This is the exact phenomenon I’d like to discuss in this week’s article. It is why we must observe what users do and not blindly accept what users say. It is also why eyewitness testimony can be unreliable in court. Most of all, I think this psychological phenomenon is one of the main drivers behind the dilution of user research I see happening in the real world, so I’m hoping this article helps explain the problem and pushes us to start correcting it.

After that usability test, I remember sitting there thinking that if that task were truly tied to their job in the real world, the experience would have landed very differently. Someone whose work depends on completing a task does not casually forget the moment they got blocked right before submission. That person ends up frustrated, escalates to a coworker, searches for documentation, opens a support ticket, or does whatever it takes to get the work done before the deadline hits.

The behavioral record of the session told the full story. Uncertainty, backtracking, and task failure actually happened despite what the participant said at the end. That retrospective perception data sounded nice, but it also contradicted everything we just watched.

And this is where things get uncomfortable. A couple of stakeholders latched onto the “real easy and intuitive” quote. The quote fit what they already wanted to believe. It aligned with the story they had been telling themselves about the workflow. It validated their expectations, so it became the main takeaway of the study. Meanwhile, the behavioral evidence was treated like a weird anomaly and was largely dismissed. That kind of misunderstanding is the entire point of this article.

Teams keep giving way too much weight to anecdotal and retrospective feedback, especially when it supports a preconceived conclusion. Confirmation bias does the rest.

Quick Level Setting

Let’s level-set around something the HCI field has understood for decades. When the question is usability, the best evidence comes from observing behavior during task execution.

The closer you get to real tasks, real constraints, and real consequences, the more trustworthy your data becomes. Full stop.

That statement is not a philosophical preference; it’s a practical one. Products fail in the real world because people cannot complete tasks, cannot recover from errors, cannot find the right thing, cannot understand what the system is doing, or cannot operate the interface with their access needs. Those are behavioral outcomes.

Perception data can still be useful, but it does not carry the same evidentiary weight when it conflicts with what happened on screen. This is why I keep pushing back on the research culture that treats “user feedback” as one big bucket.

The “User Feedback” Trap

A quote from a discovery interview, a post-task sentiment rating, and a screen recording of task failure are all forms of data. Treating them as interchangeable is where teams start making bad decisions. Here is a simple way that I like to think about it.

Behavioral Evidence answers questions like:

Did the user complete the task

Where did they get stuck

What did they misunderstand

What did they miss entirely

What did they do when the interface did not support them

Perception and retrospection answer a different set of questions:

What the user thinks happened

What they remember happening

What they believe they typically do

How they want to describe themselves and their competence

How they feel about the experience after the fact

Again, user perception data is not meaningless. It just has a very specific failure mode. It can sound confident while being wrong, especially when the experience was confusing, stressful, or unfamiliar. That is exactly what happened in the usability test scenario I described above.

The Misunderstanding

In my experience, this confusion happens for three very predictable reasons:

Perception data is easy to package.

A clean quote looks good on a slide and travels well in an organization. It can be repeated in meetings without needing much context. A short clip of failure, or a detailed behavioral breakdown, requires people to sit with discomfort and accept that the interface did not work.

Perception data often aligns with what stakeholders already want to be true.

That is where confirmation bias turns a single quote into a decision driver. Behavioral evidence is more likely to challenge the story, so it gets treated as noise.

A lot of modern “lean UX” culture has trained teams to over-index on lightweight discovery.

Interview-driven discovery creates a false sense of certainty because it produces coherent narratives quickly. Those narratives often become a substitute for validation, and validation is the part that reveals whether the narrative holds up.

This “lean UX” culture reason is the one that drives me the most nuts because the HCI field is not new.

We have decades of research about how to best evaluate interaction quality. Observed behavior has always been the gold standard that actually works.

Usability testing, accessibility evaluation, and task-based observation exist because they force the interface to prove itself under real conditions. Yet a bunch of lightweight, discovery-only, vibe-forward practices have convinced many teams that they can skip the hard part and still claim they are doing user-centered design.

More Practical Standards

The rule of thumb I wish everyone would adopt is this:

If behavioral evidence and perception evidence conflict, behavioral evidence wins. That does not mean you ignore what the person said. It means you interpret what they said through the lens of what happened.

In the example from my usability test session, the useful interpretation is not “the workflow is easy.” The useful interpretation is “the participant is describing how they want the experience to be remembered, while the interface still created a task-ending failure.”

That reframing matters because it changes what the team does next. The team either fixes the workflow or it ships a broken workflow that only feels successful in a meeting. This weighting problem becomes even more damaging when the topic is accessibility.

Accessibility is not primarily a sentiment topic. It is an interaction and capability topic.

The question is not whether the interface feels intuitive. The question is whether someone can operate it with their access needs, complete the task, and recover when something goes wrong. A screen reader does not care about the user’s post-task optimism. If a keyboard-only user gets stuck in part of the interface, that problem is still real, no matter how nicely they describe the experience later. A missing label does not become usable because the design looks clean. Behavioral evaluation reveals these failures early, while they are still fixable.

Where UX Went Wrong

I think the confusion is partly cultural. Some product environments reward speed more than truth and reward alignment more than accuracy. Others reward clean, confident narratives more than messy evidence that creates follow-up work. Once that becomes the incentive structure, teams start valuing research outputs that help move decisions along, even when those outputs are built on weaker evidence. It can turn into groupthink.

That is also how stakeholders accidentally train teams to ignore the most important signals. Positive, simple feedback like “users liked it,” “users said it was easy,” or “the design tested well” creates a sense of closure. It makes it feel like the research did its job and the team can move on. Behavioral evidence does the opposite. It surfaces friction, ambiguity, failure points, and uncomfortable obligations.

So even when nobody is trying to be careless, the org slowly drifts toward the kinds of findings that feel good to present and away from the kinds of findings that force change. Over time, that drift becomes culture. And once it becomes culture, the same mistake repeats itself across teams, products, and years.

That is how you end up with an org that can run “discovery” every quarter and still ship workflows users cannot complete. It is also how PM-centric and aesthetic-centric mindsets quietly reshape the research hierarchy until teams start acting like clean UI and positive quotes are enough to prove good UX.

A Real-World Framework

I have found that stakeholders rarely need or want education about our research methods. They need a simple way to keep evidence categories straight.

Here is the framework I use that has worked wonders in orgs of all different shapes and sizes:

Category A: Outcome evidence

This is the stuff that tells you whether the workflow actually worked.

Task completion or failure

Critical errors and where they happen

Backtracking, hesitation, and wrong-path selection

Time on task when it is meaningful

Moments where the user needs help to proceed

Accessibility outcomes like keyboard operability and screen reader comprehension

Category B: Interpretation evidence

This helps explain why the outcome happened.

What the participant believed the system was doing

What they thought a control meant

What goal they were trying to accomplish in that moment

What information they felt was missing

What they expected to happen next

Category C: Preference and sentiment

This helps you understand taste, comfort, confidence, and perceived value.

“I liked it”

“It feels modern”

“I would use this”

“It was intuitive”

Ratings, NPS-style questions, lightweight satisfaction prompts

The mistake I see in most product-level conversations is that teams treat Category C as if it can overrule Category A.

Category C can help influence direction and priorities, but Category A is the high-confidence evidence we can rely on. Once stakeholders buy into that idea, they will usually agree that Category C data cannot be used to claim success when Category A shows failure. Explained this way, it becomes much harder for someone to wave a nice quote around like it is proof.
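For teams that keep their session notes in a spreadsheet or script, the category labels can be made explicit in data. What follows is only a rough Python sketch of the idea, not a tool I am prescribing. The names `Category`, `Finding`, `headline`, and the `positive` flag are all hypothetical, and the three notes are paraphrased from the session described earlier.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    OUTCOME = "A"         # what actually happened during the task
    INTERPRETATION = "B"  # why the outcome happened, in the participant's terms
    SENTIMENT = "C"       # preference and after-the-fact feeling


@dataclass
class Finding:
    category: Category
    note: str
    positive: bool  # hypothetical flag: does this finding suggest the workflow worked?


def headline(findings: list[Finding]) -> str:
    """Pick a readout headline, letting Category A overrule Category C."""
    outcomes = [f for f in findings if f.category is Category.OUTCOME]
    failures = [f for f in outcomes if not f.positive]
    if failures:
        # Any observed failure becomes the takeaway, whatever the quotes say.
        return "Usability issue: " + failures[0].note
    if outcomes:
        return "Workflow succeeded: " + outcomes[0].note
    # Sentiment without outcome evidence is never allowed to claim success.
    return "No outcome evidence yet; sentiment alone cannot claim success"


session = [
    Finding(Category.OUTCOME, "participant did not complete the critical task", False),
    Finding(Category.INTERPRETATION, "asked whether the system was supposed to do something it was not doing", False),
    Finding(Category.SENTIMENT, "said it was real easy and pretty intuitive", True),
]

print(headline(session))
```

The design choice that matters is that the function only looks at Category A when deciding the headline. Category B and C findings stay in the record for interpretation and prioritization, but they cannot change the verdict.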

Reframing in the Real World

To me, this reframing is the part that matters most in practice. When a stakeholder grabs onto a quote like “it felt easy” or “that was intuitive,” you need language that calmly redirects the conversation back to the stronger evidence without turning it into a personal disagreement.

The clearest move is to separate perception from outcome. You can say something like, “That comment is useful as perception data, but the task still did not complete, so we should treat this as a usability issue,” or “The quote is worth noting, but it cannot be our main takeaway because the behavioral record shows the workflow actually broke down while in use.” Framing it this way keeps the discussion grounded in evidence quality rather than in who is right.

I think this becomes even easier to explain when you connect it to accessibility. Accessibility already forces teams to be more precise about what counts as valid evidence. A keyboard-only user getting stuck in part of the interface is a behavioral outcome that no one can ignore. Nobody would seriously argue that a participant saying “it felt accessible enough” is strong proof that the experience is actually accessible.

Usability should be treated the same way. A participant saying a flow felt easy or intuitive does not override the fact that they got stuck, failed the task, or needed help to continue. The same weighting rule applies in both cases. When perception and behavior conflict, behavior is the evidence that should carry more weight.
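If your study notes record both what happened and what the participant said afterward, the conflicts can be surfaced mechanically instead of relying on whoever remembers the session best. Here is a minimal Python sketch under stated assumptions: the `TaskResult` fields and the `perception_conflicts` helper are hypothetical, `said_easy` assumes someone has coded the wrap-up comment by hand, and the P2 and P3 rows are made-up illustration data rather than results from a real study.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    participant: str
    task: str
    completed: bool    # observed during the session
    needed_help: bool  # the participant needed help to proceed
    said_easy: bool    # hypothetical hand-coding of the wrap-up comment


def perception_conflicts(results):
    """Return results where the task was called easy but the behavior says otherwise."""
    return [
        r for r in results
        if r.said_easy and (not r.completed or r.needed_help)
    ]


results = [
    # P1 mirrors the session from this article; P2 and P3 are invented examples.
    TaskResult("P1", "critical task", completed=False, needed_help=False, said_easy=True),
    TaskResult("P2", "critical task", completed=True, needed_help=False, said_easy=True),
    TaskResult("P3", "critical task", completed=True, needed_help=True, said_easy=False),
]

for r in perception_conflicts(results):
    print(f"{r.participant}: called '{r.task}' easy, "
          f"but completed={r.completed}, needed_help={r.needed_help}")
```

Each flagged row is a prompt to go back to the recording, and the behavioral columns are what decide how the task gets reported.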

Conclusion

My hope for the future is that teams stop treating behavioral research like an optional upgrade. Behavior is the main evidence type for usability, accessibility, and workflow success, and I’d love to see perception treated as supporting evidence that helps teams interpret and prioritize. When the two conflict, that conflict is itself a finding, and it usually points to a social dynamic, a memory effect, or a mismatch between conceptual clarity and operational execution.

If you are a user researcher, I’m begging you to be more aware of this and to protect this distinction carefully. Do that by making it explicit in your readouts. Label data with the categories I described above, and do not let a quote become the takeaway when the behavioral evidence says something else.

If you are a stakeholder, please resist the temptation to grab the quote that makes you feel better. The job is not to feel better. It’s to ship products that work for real users doing real tasks in the real world. At the end of the day, your users will either complete the tasks they need your products for, or they’ll find a competitor who can do what you cannot.

Have you ever seen this misinterpretation of perception data in your work? If so, comment here or DM me with your stories. I’d love to know whether I’m the only one seeing this. Thanks for reading!