Weaponized Institutional Knowledge in UX

A Hot Take Rant

Summary: Institutional knowledge is often treated as a strength, but in practice, it is one of the most common ways UX best practices get ignored, undermined, or shut down. This post breaks down how the phrase "but our users are different" becomes a weapon and explains why it's time to shift the burden of proof back where it belongs.

Institutional knowledge has become a kind of sacred cow in many organizations. It's praised. Protected. Framed as a strength. And on paper, sure, it makes sense. Knowledge that lives in an organization, built up over time through experience, is valuable. I'm not arguing with that.

What I am saying is that in the real world, institutional knowledge often gets weaponized, especially against us UX researchers. It's used as a conversation ender, not a conversation starter. A justification instead of a hypothesis. A way to dismiss UX fundamentals instead of engaging with them.

And while some might call that an exaggeration, I've seen it enough times across enough companies to know that it's a real pattern. Not an isolated one. And it's quietly undermining UX work from the inside out.

This post is about what happens when experience in an organization is used not to build up UX, but to shut it down.

The 90/10 Rule of UX Best Practices

UX best practices aren't arbitrary. They come from decades of research and behavioral studies conducted with real users in controlled environments. They're backed by industry-wide data on what actually works. And most importantly, they're validated through repeated testing.

The Nielsen Norman Group conducted a macro analysis of their best practice recommendations not too long ago. They found that following their published foundational usability guidelines works out in the real world ~90% of the time.

That’s right. About 90% of the time, simply following a UX best practice results in a design that meets rigorous usability standards in the real world.

That leaves only about 10% of cases where breaking a best practice might result in a better user experience. Not will. Not likely to. Might.

So here's my question: If there's only a 10% chance that breaking a UX best practice is going to work, why do so many product teams ignore best practices by default?

Why do I still see:

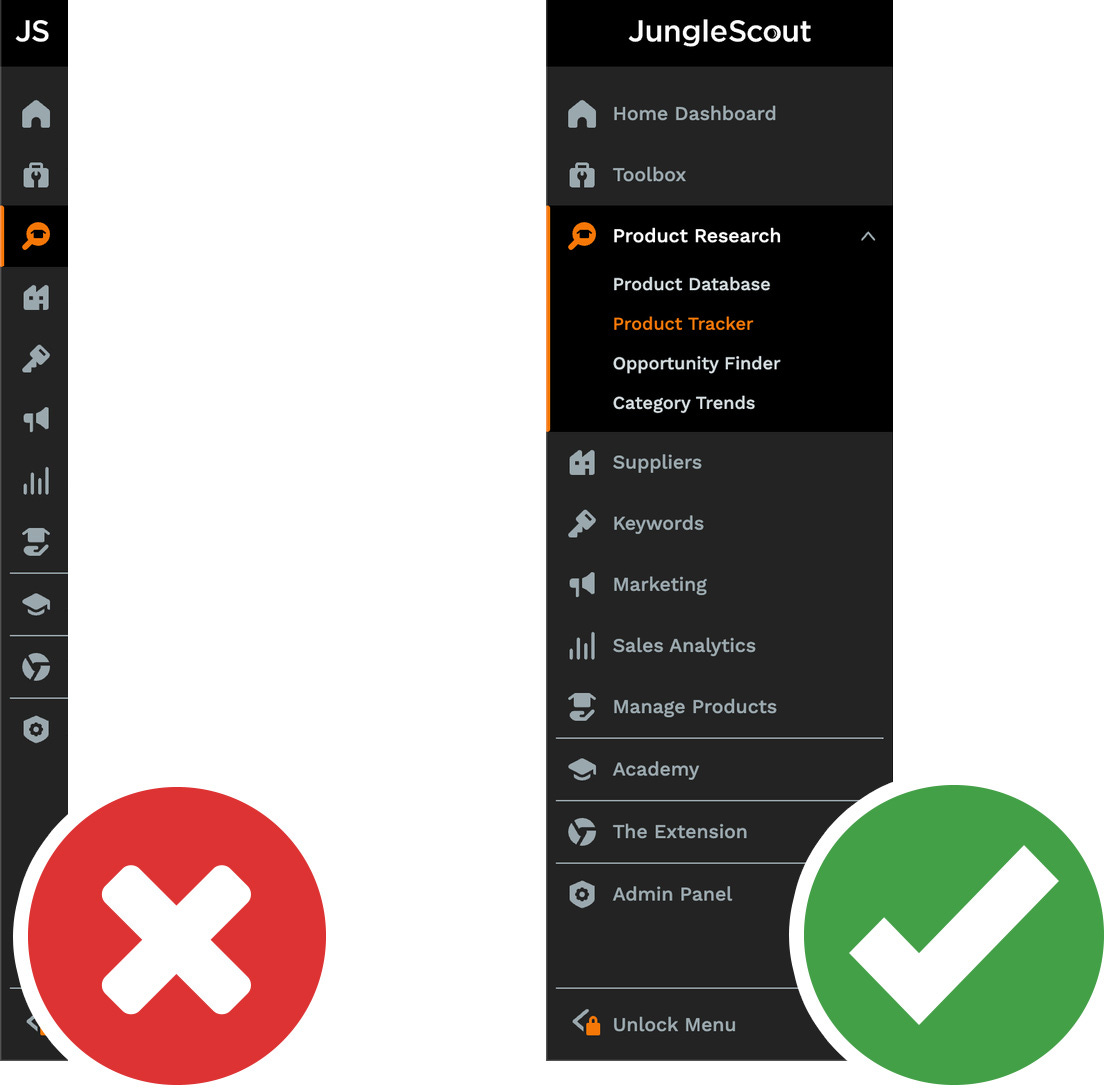

Icon-only navigation with no labels

Hamburger menus in large desktop viewports

Critical controls that only appear on hover

Unprompted UI animations

Scrolljacking and cursor hijacking

Forms that don't explain errors

Patterns that were proven to fail 30 years ago still being used today

You would think we'd all be optimizing for the 90% case, right? But instead, I see teams confidently rolling the dice. And I'm not talking about scrappy startups with zero UX hires. I mean enterprise-level organizations with "mature" UX teams.

But Our Users Are Different…

This is the part that gets under my skin.

Many times when I raise a UX best practice concern, I hear something like this:

"But our users are different."

Let's unpack that.

If you've worked in UX long enough, you've probably heard some variation of this phrase. It sounds harmless at first. Like a useful reminder to be context-aware. But more often than not, it's used to shut down discussion. It becomes a safety mechanism for poor UX design choices. A tool to silence pushback.

Let me give you a few real examples I've heard from experienced UXers and stakeholders in actual meetings:

"But our users have memorized our unique icon language. They don't need labels. Labels would just clutter things."

"But our users are super technical people. They like reading a lot, and see jargon as a sign of professionalism."

"But our users don't like scrolling. They want everything above the fold. If we make them scroll, they'll miss it."

"But our users don't include anyone with accessibility needs. We've never had a complaint, so that's not a concern for us."

These aren't strawman arguments. These are actual things I've been told when pointing out issues that clearly go against widely accepted UX principles.

And here's the kicker. In my almost 20-year career, in every single case except one that I can remember, when we were allowed to run user testing to validate someone’s best practice counterclaim, the results aligned with best practices, not the institutional knowledge.

Take icon-only buttons. I've raised this issue at seven different companies. All of them were using vertical navigation bars with icon-only buttons on the left-hand side of their apps. Every time, I was told, "our users are different, they know what these icons mean." Every time, we ran a study. Every time, the test participants fumbled. They clicked the wrong things. They got frustrated. They hovered back and forth trying to figure it out. They got it wrong.

These weren't outlier users. These weren't new users. In some cases, they were power users. It didn't matter. Without labels, the icons still caused confusion. Just like the foundational research conducted decades ago predicted they would.

So when someone says "but our users are different," my default response has changed. I used to think it was an opportunity for curiosity. A chance to dig deeper, test the edge case, see if we'd found a new pattern. But now, I've learned to be much, much more cautious.

Because nine times out of ten, it's not a hypothesis. It's a mic drop moment for the person saying it. A way to claim authority without showing evidence.

And here's why that's a problem. In healthy UX cultures, claims about user behavior get tested. In low-maturity orgs, they get defended. The person who's been there the longest is often seen as the expert, even if their knowledge is purely anecdotal.

That's how institutional knowledge becomes weaponized. It's not used to help us design better. It's used to shut down design conversations entirely.

When "Curiosity" Isn't Welcome

In the early years of my UX career, I took comments like "our users are different" as an opening. I thought this meant someone had seen something interesting. Maybe they had data. Maybe there was an insight I didn't know yet.

So I'd lean in. I'd say something like, "That's great. I'd love to learn more. How did we figure that out?"

And instead of excitement, what I mostly got back was friction. Not disagreement. Not even skepticism. What I got was a sense of territorialism. As if I had questioned not just a UX decision, but someone's rank in the organization.

Suddenly, I wasn't collaborating. I was challenging authority. My simple "I'd love to learn more" turned into a threat. I started hearing things like:

"I've been here for ten years. I think I’d know."

"I work with our users every day. What more do you need?"

"We already know this. It's not worth looking into any further."

That's when I realized these weren't just differences in opinion. They were differences in how we define truth. To me, UX truth comes from observing users. To them, truth came from internal consensus and seniority.

That's why I call it weaponized. Because it's used to stop research. To block validation. To override evidence. In many cases, it's a shortcut to winning an argument with no data at all.

How Institutional Knowledge Hurts UX

Let's look at some of the specific ways institutional knowledge gets misused. Again, these aren't hypothetical. These are things I've seen over and over again in large, well-resourced companies.

1. Shutting Down Research Before It Starts

You propose a usability test to evaluate an updated feature. The response is, "We don't need that. We already know what our users do in this workflow."

This happens even after a usability problem has been observed internally. Even when you have early data suggesting friction. Even when the update underperforms its expected launch adoption rate. The conclusion? We messed up the marketing communications for the update. Wait, what?!

And when you ask how they came to that conclusion, you find out the marketing manager and the PM don’t get along. Hahahaha. Sound familiar?

In this case, instead of being curious, the team doubles down on what they already believe is true. Or even worse, what they simply want to be true. In this all-too-common scenario, the research gets framed as a waste of time, but somehow everyone finds time for debates and scapegoating.

2. Flipping the Burden of Proof

Someone says, "Our users are different." Now it's your job to disprove that.

This is not how science works. This is not how truths are discovered. You can't prove a negative. You can only validate a hypothesis through data. I cannot wrap my brain around why so many smart people completely misunderstand this, but nonetheless, it is a very common misunderstanding.

The burden of proof should be on the person making the exception to the rule, not on the person following the UX standard.

If we treat best practices as the baseline, which we should, then exceptions should require validation. Not just false confidence.
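When there is a quantitative study to lean on, that validation can be as simple as comparing task success counts between the two designs. Here is a minimal Python sketch, assuming a hypothetical study where each participant either completed the task or didn't. The function name, the counts, and the choice of a one-sided Fisher exact test are all assumptions made for illustration, not a prescribed method. What matters is the framing: the exception has to show it beats the baseline, not the other way around.

```python
from math import comb

def p_exception_beats_baseline(exc_success, exc_fail, base_success, base_fail):
    """One-sided Fisher exact test.

    Returns the probability of seeing at least `exc_success` successes in the
    exception group if the two designs actually performed the same.
    A small value supports the exception; a large one does not.
    """
    total = exc_success + exc_fail + base_success + base_fail
    successes = exc_success + base_success   # successes across both designs
    exc_n = exc_success + exc_fail           # participants who saw the exception

    # Sum hypergeometric probabilities for outcomes at least as favorable
    # to the exception as the one observed.
    favorable = sum(
        comb(successes, k) * comb(total - successes, exc_n - k)
        for k in range(exc_success, min(successes, exc_n) + 1)
    )
    return favorable / comb(total, exc_n)

# Hypothetical counts: icon-only nav (the exception) vs. labeled nav (the baseline).
p = p_exception_beats_baseline(exc_success=6, exc_fail=4, base_success=9, base_fail=1)
print(f"p = {p:.3f}")
```

With these made-up numbers, the p-value comes out far above the usual 0.05 threshold, so the "our users are different" claim would not be supported and the best practice stays the default.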

3. Performing Research With Built-In Bias

I've seen this a lot during one-on-one interviews. A UXer goes into the session with a subject matter expert mindset. They know the product. They know the use case. They think, "If I match the user's language, we can move past the basics and get to the real stuff."

But what ends up happening is that the UXer drives the conversation. They ask leading questions. They explain things that weren't confusing. They provide examples to frame responses. They unconsciously steer the interview toward their preferred outcome.

As far as I can tell, almost none of this is malicious or even conscious behavior. It's just bad research technique. Real insight comes from allowing users to show you how they think, not from feeding them the right answers.

The best UX researchers I know are capable of doing something extremely advanced. They know everything about the product and still walk into every session with a beginner's mindset. They ask dumb questions. They let users lead. They stay quiet even when they know the answer. That's what real expertise looks like.

4. Talking to the Same Users Over and Over Again

Another sign of weaponized institutional knowledge is when a team only does research with the same handful of sympathetic users. PMs often call these users "our champions." But what they really are is too familiar. Predictable. Overly knowledgeable of the team's goals.

I once worked with a team that had built an internal user committee. These users had been involved in dozens of design reviews. They knew the product roadmap. They even used UX terms when providing their feedback. These people weren't acting as users anymore. They were now acting as unofficial team members!

So when the design team said, "Our users loved this new prototype," what they really meant was "Our five favorite users said yes." That's not user-centered. That's self-reinforcing.

Instead, a better practice is to seek new voices every time. If you have a small user base, rotate participants and select them based on task area. Make sure you're talking to people who can still surprise you. A good rule of thumb is that when no user is ever confused or frustrated in your research sessions, you're probably doing it wrong.
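For teams with a small user base, the rotation idea above can be made mechanical. What follows is a rough Python sketch, assuming you keep a simple log of who attended past sessions. The data shapes, the `pick_participants` name, and the example users are all hypothetical.

```python
from collections import defaultdict

def pick_participants(users, past_sessions, task_area, count):
    """Pick `count` users for `task_area`, preferring those interviewed least often."""
    # Count how many earlier sessions each user has already taken part in.
    times_seen = defaultdict(int)
    for session in past_sessions:
        for user_id in session:
            times_seen[user_id] += 1

    # Keep only users who actually work in the task area under study.
    eligible = [u for u in users if task_area in u["task_areas"]]

    # Least-seen users first; ties broken by id so the result is deterministic.
    eligible.sort(key=lambda u: (times_seen[u["id"]], u["id"]))
    return [u["id"] for u in eligible[:count]]

# Hypothetical user pool and session history.
users = [
    {"id": "ana", "task_areas": {"billing", "reports"}},
    {"id": "ben", "task_areas": {"billing"}},
    {"id": "cy", "task_areas": {"reports"}},
    {"id": "dee", "task_areas": {"billing"}},
]
past_sessions = [["ana", "ben"], ["ana"]]

picked = pick_participants(users, past_sessions, "billing", 2)
print(picked)
```

Here "ana" has already sat in two sessions, so she drops to the back of the line and the fresher voices get picked first.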

5. Research as a Formality

Sometimes, UX teams run studies just to check a box. They bring a polished design to a focus group or user council, make a short pitch, and ask, "Does this make sense?" The group says yes. The team walks away saying, "Research confirmed it."

But there was no learning. No discovery. No critical feedback loop. It was a presentation, not a research study. The activity was designed to build consensus, not to question assumptions. That's not research at all. Research is about learning.

6. Institutional Knowledge Bias Is Also Subtle

A lot of the misuse of institutional knowledge is unconscious. Even experienced UXers can fall into the trap. They start assuming they know what the user is going to say. They start solving problems in their head before the user has even described the pain point.

It happens when we interview with a plan to validate, not to listen. It happens when we skip past what feels "obvious" and try to answer edge cases instead of confirming the basics.

It even happens when researchers over-identify with their audience and stop noticing the blind spots in their product. Again, these aren't red flags you should use to judge someone. They’re just part of human nature. But they're dangerous all the same. When curiosity disappears, so does good UX.

Conclusion

Here's my position. And I honestly don't think it's controversial.

If there's a strong, research-backed UX best practice, we should start there. And if someone wants to break that best practice, they need to prove that it works better for their users. They need to show it with behavioral data. Not with anecdotes. Not with internal quotes. Not with "I've been here long enough to know."

The burden should not be on researchers to disprove unsupported claims. That's not science. That's status.

Institutional knowledge is fine, as long as it lives alongside user-centered data. But when it replaces it? When it overrides it? When it's used to stop research, stall improvements, and win arguments? That's when it stops being useful. That's when it becomes weaponized.

Thanks for reading. If this resonates or if you've run into similar situations, I'd love to hear from you. Let's keep pushing for better UX, even when it's unpopular.

Oh, and one last thing: no, your users are probably not that different from the rest of the world.
